“Cutout” is one of those problems that looks trivial on the surface — just remove the background, right? — but, as anyone who has fought with strands of hair or a glass cup can tell you, it is one of the hardest pixel-level tasks in computer vision.

I’m a CV researcher at LibAI Lab working on image matting and generative models. Our team incubates Cutout.Pro, an AI image-editing platform used by 25k+ businesses for exactly this problem. In this post I want to demystify how AI cutout actually works, where the hard cases live, and walk through how to remove the background of any image in under a minute.

Table of contents

- What is “image cutout”?

- Why background removal is hard

- How modern AI cutout works

- Step-by-step: removing a background with Cutout.Pro

- Pro tips for better cutouts

- Common use cases

- FAQ

What is “image cutout”?

In academic terms, what most people call “cutout” is the union of two related problems:

- Image segmentation — for every pixel, decide whether it belongs to the foreground or the background. The output is a binary mask

{0, 1}. - Image matting — for every pixel, predict a continuous alpha value

α ∈ [0, 1]that represents how much of that pixel belongs to the foreground. The output is a soft alpha matte that can preserve hair, fur, motion blur and translucent objects.

In product terms, “cutout”, “background remover”, “bg eraser”, “transparent PNG maker” are all the same idea: isolate the subject of an image and throw away the background. A high-quality cutout looks something like:

Original photo ──► α-matte ──► Foreground × α + new BG × (1 − α)

That last formula is the compositing equation every CV researcher has seared into their brain. It’s the reason a great cutout can be dropped onto any new background and still look photoreal.

If you want to play with this right now, the easiest entry point is the free Cutout.Pro Background Remover — it implements exactly this pipeline behind a one-click UI.

Why background removal is hard

It is tempting to think “color thresholding + a bit of edge detection” should be enough. Anyone who has tried it knows it isn’t. Real photos break naive cutouts in at least four ways:

| Failure mode | Why it breaks classical methods |

|---|---|

| Hair / fur / fluff | Thousands of sub-pixel strands; no clean edge to trace |

| Translucent objects (glass, smoke, water, veils) | Foreground and background literally mix in the same pixel |

| Color similarity between subject and background | A blonde child against a beige wall has no contrast to lock onto |

| Motion blur and bokeh | Edges are inherently soft; a binary mask can’t represent them |

This is exactly why we treat matting as a regression problem over alpha, not a classification problem. Our team has published several papers in this area — for example TIMI-Net (ICCV 2021) and PSG (ICASSP 2023) — and a lot of those research ideas now run inside the production Cutout.Pro models.

How modern AI cutout works

A modern AI cutout system roughly does this:

- Encoder. A deep CNN or ViT backbone (e.g. a U²-Net / SegFormer / SAM-style encoder) extracts multi-scale features from the input image. These features capture both what is in the image and where its boundaries lie.

- Decoder + matting head. A decoder upsamples those features to full resolution. Crucially, instead of predicting a hard mask, it predicts a continuous alpha matte — typically with a refinement branch that focuses on uncertain “trimap” regions like hair edges.

- Edge / detail refinement. Many production systems run a second small network specifically over the boundary band to recover fine details, which is the difference between “okay-ish” and “Photoshop-worthy” results.

- Compositing. The final RGB-A image is exported as a transparent PNG, ready to be dropped onto any new background.

What changed in the last 2–3 years isn’t the high-level recipe — it’s the data. Models are now trained on tens of millions of high-quality matting samples, often with synthetic compositing to teach the network exactly what hair-on-cluttered-background should look like. That’s why a free tool can now match what a senior retoucher used to bill hourly for.

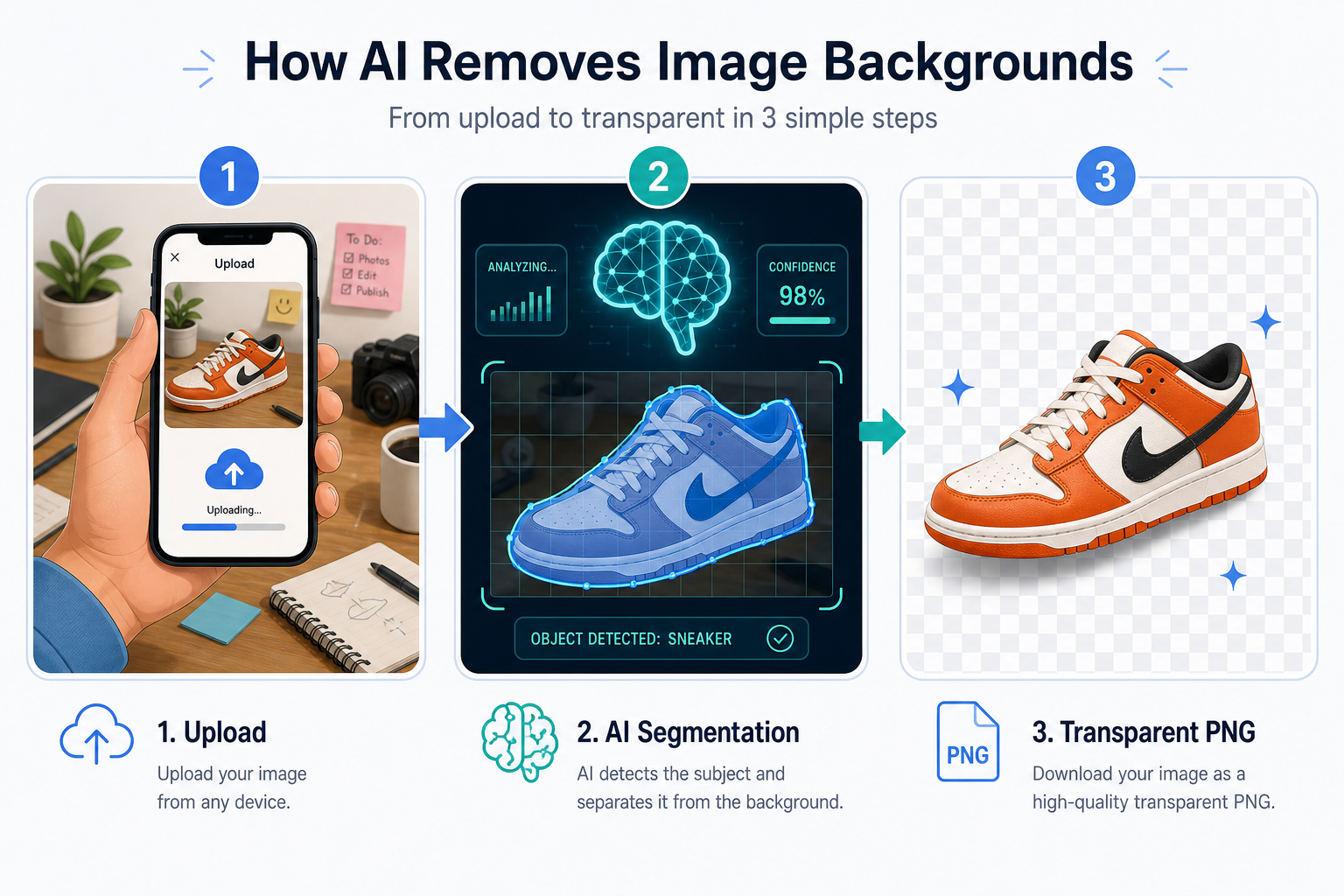

Step-by-step: removing a background with Cutout.Pro

Here is the workflow I use almost every day. Total time: under 60 seconds.

1. Open the tool

Go to cutout.pro/remove-background. No sign-up required for low-resolution downloads.

2. Upload your image

Drag and drop, paste a URL, or Ctrl+V an image straight from your clipboard. Cutout.Pro handles JPG, PNG and WEBP. It supports batch upload if you have a folder of product photos for an e-commerce store.

3. Let the AI do its thing

You don’t paint masks, pick colors, or tweak feather radii. The model runs in roughly 1–3 seconds and returns the transparent PNG. Edge quality on hair and fur is the part I’d most encourage you to inspect — if it nails that, the rest is easy.

4. (Optional) Edit and refine

Click Edit / Erase & Restore if you want to:

- Manually erase any leftover bits the model wasn’t 100% sure about.

- Restore a region the model removed too aggressively.

- Add a shadow or drop-shadow for a more realistic composite.

- Replace the background with a solid color, a gradient, or an AI-generated scene via Background Diffusion.

5. Download

Hit Download. Free output is medium resolution; HD/4K downloads use credits. If you need to process thousands of images, the Cutout.Pro API is the way to go — that’s what e-commerce teams use to keep product catalogs consistent.

Going deeper: if you’re a developer building your own app, the same models are available as a REST API, plus a desktop app for batch processing and a Shopify plugin. For full-blown image generation and editing (Nano Banana, Flux Krea, Seedream, Sora 2.0, GPT Image), the same team runs cutout.tech — their unified API platform.

Pro tips for better cutouts

A few practical things I’ve learned both from research and from looking at thousands of user-submitted images:

- Light your subject, not the background. Even a phone flash bouncing off a wall makes the subject pop and helps the model lock onto edges.

- Avoid color matches. A white shirt on a white wall is the matting model’s nightmare. Move 30 cm to a different background and you’ll get a noticeably better cutout.

- For hair: shoot wider. Tight crops where strands run off the frame force the model to extrapolate. A bit of headroom helps.

- Don’t pre-compress. Heavy JPEG artifacts confuse edge detection. If you can, upload the original.

- Use HD output for print. The default download is fine for web, but printing or large e-commerce hero images benefit from HD/4K. The detail recovery branch produces noticeably cleaner edges at higher resolutions.

- Batch first, refine later. For e-commerce, run the full catalog through automatic cutout, then only manually touch up the 5% of edge cases. This is the workflow I see most pro studios converging on.

Common use cases

- E-commerce product shots — consistent white backgrounds across thousands of SKUs.

- Print-on-demand — isolating logos, faces and characters for mugs, t-shirts and posters.

- Marketing & social — moving the same subject across multiple campaign backgrounds without a re-shoot.

- Passport / visa photos — tools like the Cutout.Pro Passport Photo Maker handle compliance with country-specific rules automatically.

- Video content — the same matting tech extends to the temporal domain via video background removal, which kills the need for a green screen for most YouTube and TikTok creators.

- AI-generated content — once you have a clean cutout, you can drop the subject into a diffusion-generated scene using Background Diffusion.

FAQ

Is the AI cutout really free? Yes. Standard-resolution exports are free; HD/4K downloads and API quota use credits. Non-profits get free credits — see the Cutout.Pro non-profit page.

Will it work on transparent objects (glass, water, smoke)? Mostly yes — that’s the whole point of matting vs. segmentation. The alpha channel is continuous, so glass edges and water spray come through with sub-pixel transparency. Heavy motion blur is still a hard case.

What about privacy? Uploaded images are deleted within an hour. If you have stricter requirements, the self-hosted desktop app keeps everything on your own machine.

Can I batch-process thousands of images? Yes — either via the desktop app or the API. The API is what most e-commerce platforms integrate.

TL;DR

- “Cutout” = image matting: predicting a continuous α-matte per pixel, not a binary mask.

- Modern systems are an encoder + decoder + boundary-refinement head, trained on tens of millions of synthetic composites.

- For 99% of users, Cutout.Pro’s free background remover is the fastest way to get a high-quality transparent PNG. For developers, the API and Shopify plugin handle scale.

- If you want to dig into the research, my matting publications and the LibAI Lab team page are good starting points.

If this post helped, please star the repo on GitHub — and feel free to reach me at jxie@picup.ai for research collaborations or product feedback.

Next up: a deep-dive on plant identification and disease detection with heysproutly — same computer-vision toolkit, very different domain.